"No Man Left Behind": American Technology Ships with Our Values

China is already building autonomous weapons at scale. The question is whether America builds them first, or cedes the rules of war to Beijing.


When DUDE44, an American F-15, was downed over Iranian territory, the United States expended considerable resources—logistics, risk, cost—to recover the stranded Americans. Not because the math favored it in any narrow operational sense, but because as Americans we believe in “no man left behind” as a tenet.

This deeply held belief—that we are a nation that does not leave our warfighters behind—shapes our R&D agenda and our military capability stack. It shapes what war is, when we choose to fight it. The engineering requirements of “how do we bring them home” are a technical constraint for us, and they are the good kind of constraint: one that links our tools and our values to each other.

Our commitment to “no man left behind” matters a great deal in how we prepare for autonomous warfare. The moral case for autonomous warfare exists not despite our values but because of them. A democracy that refuses to replace soldiers with machines when machines can do the job is breaking its covenant with the people it sends to fight. America must now build autonomous systems at both the quality and quantity required to win. We have the talent advantage. We are losing the production race. The stakes are whether the United States arrives at the next conflict with overwhelming superiority in autonomy, or whether we cede that advantage to adversaries who are already building it.

From the Stick to the Swarm

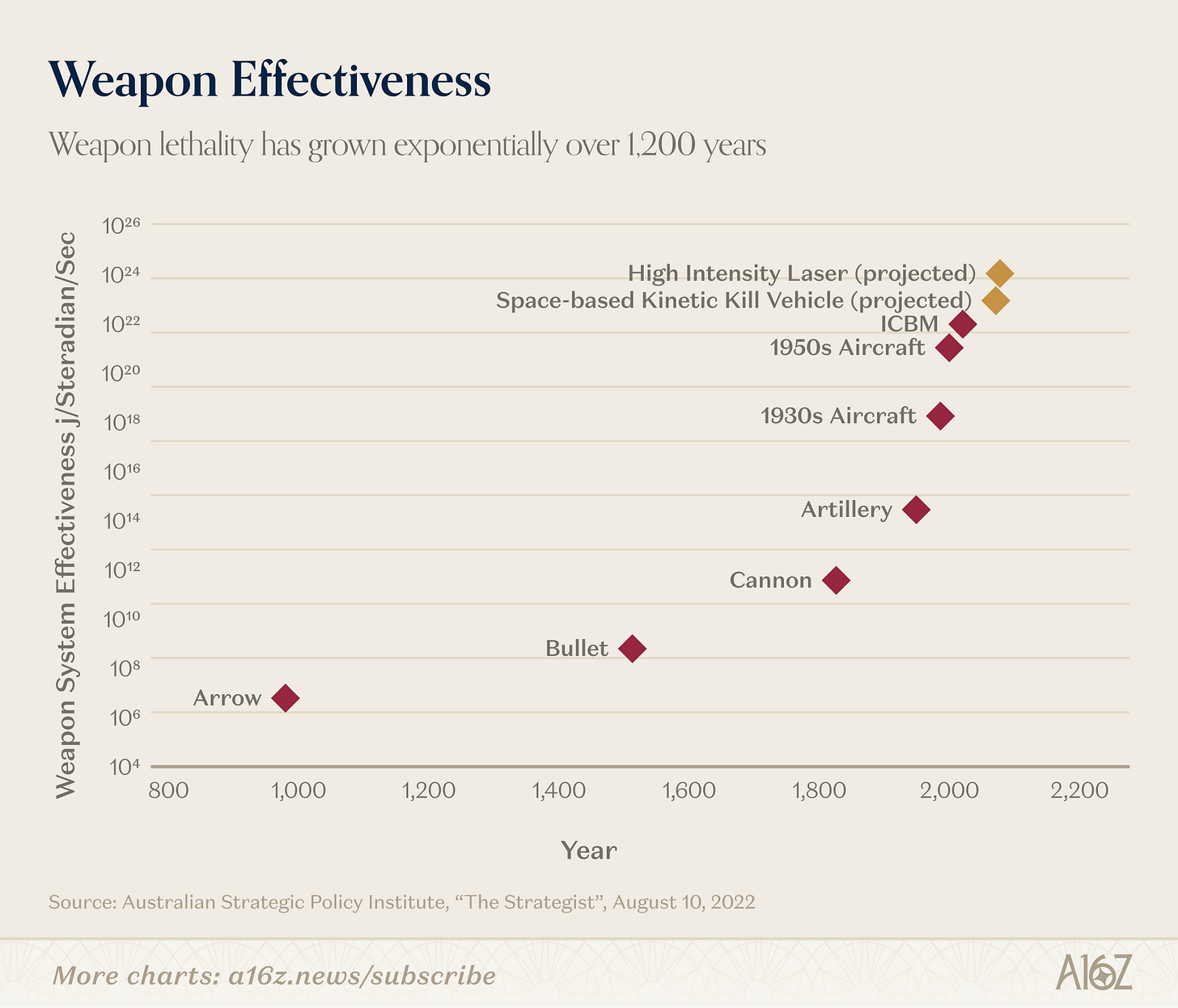

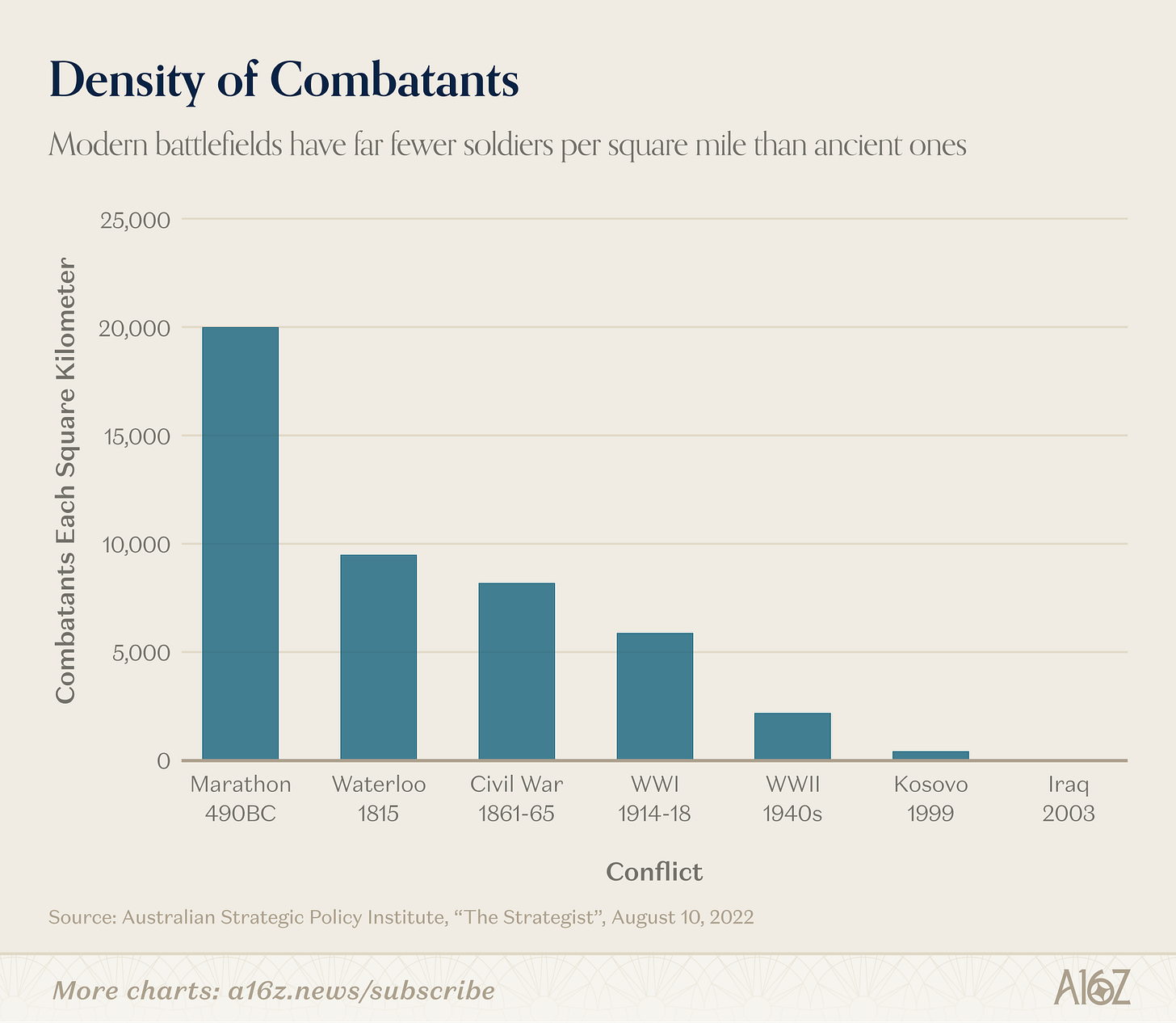

The entire history of warfare can be read as a single, accelerating motion: extending the standoff between weapon and target. The first man to pick up a stick made a two-foot standoff. The first to throw a rock made it a hundred. The American sharpshooter at Saratoga, firing accurate rounds from cover at three hundred yards, opened a gap British line infantry could not close. The British called it cowardly, the standard response of an army watching its officers fall to a weapon it cannot answer. But Britain fielded its own riflemen within a generation. This is how it goes.

The same charge has followed every standoff weapon since. The airplane moved the standoff to hundreds of miles. The Italians used it for reconnaissance in 1911, and by 1941 carrier aviation let Japanese pilots fly 230 miles to Pearl Harbor and bomb a fleet whose sailors they would never see face to face. The bomber over Hiroshima killed roughly 80,000 people in a flash and ended the war in the Pacific without the million American casualties projected for an invasion of the home islands. An infantryman asked to do that work one body at a time could not have done it. The technology did the work the human could not. It also brought a generation of American servicemen home alive.

The nuclear bomb extended the standoff to civilizational scale and, by being too costly to use, anchored it there. The United States built one of the largest arsenals in human history and has used exactly two warheads in war. Cold War deterrence did not eliminate war. Proxy fighting continued in Korea, Vietnam, Afghanistan, and dozens of smaller theaters. But it kept the great powers from direct confrontation for eighty years. The lesson, drawn correctly by the strategists who set policy in 1945 and after, is that capability prevents catastrophe more reliably than restraint without it.

The next iteration of that machinery is already here. Cheap, swarming, AI-targeted autonomous systems will dominate the battlefield of the next century the way airpower dominated the last. Ukraine and Russia have been fighting an autonomous war for three years, both sides building, fielding, and adapting in real time. The first-person-view (FPV) drone costs $400 to $500 per unit, roughly the same as a single 60mm mortar round. For the price of an unguided munition, the modern infantry unit now fields a precision-guided one.

Operation Spiderweb, June 1, 2025, was the proof of concept at strategic scale. One hundred and seventeen FPV drones, concealed in wooden sheds bolted to the backs of trucks, driven across Russia by civilian drivers who didn’t know what they were carrying, launched by remote command from inside Ukraine, guided by AI trained on museum models of Soviet aircraft. Forty-one strategic bombers destroyed or damaged. An estimated $7 billion in losses for an operation that cost in the thousands of dollars. No Ukrainian operator was within a thousand miles of the kill zone. No Ukrainian casualties.
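The cost asymmetry described above can be made concrete with a few lines of arithmetic. The sketch below uses only figures quoted in this article and rests on one loud assumption: that the generic FPV price of $400 to $500 per unit also applies to the drones used in Spiderweb, which the article does not state. It counts airframes only and ignores trucks, sheds, logistics, and planning, so it should be read as a rough lower bound on cost, not an accounting of the operation.

```python
# Back-of-the-envelope cost-exchange ratio using figures quoted in the article.
# ASSUMPTION: the generic FPV unit price ($400-$500) applies to the Spiderweb
# drones. The article does not say so; this is for illustration only.

DRONES_USED = 117                      # drones reported in the operation
UNIT_COST_LOW_USD = 400                # low end of quoted FPV unit price
UNIT_COST_HIGH_USD = 500               # high end of quoted FPV unit price
ESTIMATED_LOSSES_USD = 7_000_000_000   # estimated losses quoted in the article


def airframe_cost_range(count, unit_low, unit_high):
    """Return (low, high) total airframe cost for `count` drones."""
    return count * unit_low, count * unit_high


def exchange_ratio_range(losses, cost_low, cost_high):
    """Return (low, high) ratio of losses inflicted to airframe cost.

    The higher cost yields the lower ratio, and vice versa.
    """
    return losses / cost_high, losses / cost_low


cost_low, cost_high = airframe_cost_range(
    DRONES_USED, UNIT_COST_LOW_USD, UNIT_COST_HIGH_USD
)
ratio_low, ratio_high = exchange_ratio_range(
    ESTIMATED_LOSSES_USD, cost_low, cost_high
)

print(f"Airframe cost: ${cost_low:,} to ${cost_high:,}")
print(f"Exchange ratio: {ratio_low:,.0f}:1 to {ratio_high:,.0f}:1")
```

Under these assumptions the airframes alone come to tens of thousands of dollars, and the exchange ratio still lands around five orders of magnitude in the attacker's favor. The real ratio depends on costs the article does not itemize.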

In contrast, Russia fed infantry into Avdiivka in human waves, at casualty ratios that would end any American commander’s career, absorbed as acceptable cost because the underlying obligations are simply different. A military that treats soldiers as mass does not face the engineering problem of how to extract a casualty from a drone-saturated environment. It never asks that question, therefore it never builds that answer. The nation that goes back for its people just builds different machines than the nation that does not.

The Usual Concerns

Some make a serious case that autonomy is structurally different from prior leaps in standoff, and that the differences make it war-promoting rather than war-deterring. Three versions of that claim deserve direct rebuttal.

The first holds that autonomy lowers the threshold for democratic leaders to authorize force by removing the political cost of casualties: the flag-draped coffins coming home to Dover. The argument gets the moral framing wrong. We have never said Kevlar encourages war, or armor encourages war, or that the medevac helicopter encourages war. America must defend our interests. Casualties in that pursuit are a tragedy to be minimized. Refusing to minimize them, in the name of preserving political restraint, is not a coherent moral position.

The second holds that AI removes the psychological resistance to killing. The idea traces to S.L.A. Marshall, a World War II combat historian who claimed that most soldiers, as many as 85%, never fired their weapons in battle, held back not by fear but by a deep human resistance to killing another person. Dave Grossman later built on that finding in On Killing, arguing that modern military training works precisely by conditioning soldiers to overcome that resistance. The concern, then, is that autonomous weapons do the same thing, permanently and at scale. But the argument conflates two different resistances. Marshall’s research, contested as it is, was about the soldier on the trigger, the rifleman who hesitates at close range. The decision to go to war is made by commanders and political leaders who have never been on the trigger and have never carried that resistance. Lincoln sent Sherman through Georgia. Truman authorized Hiroshima. The wall Grossman names is the wall the trigger-puller hits. It has never been the wall that restrained the order to fire. Removing it changes who pulls triggers. It does not change who orders force.

The third holds that a nation with mature autonomous capability, facing one without, has every incentive to use the asymmetric window before it closes. The historical record contradicts this. The United States held a unilateral nuclear monopoly from 1945 to 1949 and used the weapon zero additional times. Asymmetric capability has rarely made use inevitable. What it produces is leverage, exercised through signaling and credible threat rather than detonation. The more pressing question is what happens when an adversary holds asymmetry over us. Building decisive autonomous capability is how we ensure no adversary holds that asymmetry, in the same way the nuclear arsenal ensured no adversary held nuclear asymmetry over us. The argument for building autonomy parallels the argument that has held the peace under nuclear arms for eighty years.

The moral argument for autonomy eclipses any of these weak arguments against it. In a democracy, the state asks young men and women to put their bodies between the country and its enemies. The state makes that request under a covenant: it will send them only when necessary, and when it does send them, it will give them every advantage available to bring them home. The covenant binds the elected leadership of a democracy more deeply than it binds the leadership of any other form of government. A democracy that refuses to develop technology capable of substituting machines for human soldiers in lethal roles, when that technology is available, is breaking that contract. It is choosing to send a 19-year-old to die in a place where a machine could have completed the mission.

Not Just a Weapon

Autonomy, however, means more than lethality. That framing, dominant in the public debate, misses the full extent of what the technology enables and why it matters.

The engineering stack that makes Spiderweb possible (autonomous navigation, sensor fusion, real-time decision-making in denied and contested environments) is the same stack required to put a machine into a hot zone to extract a wounded soldier. Not a related technology. The same technology, expressed in a different mission. While autonomy is most visibly transforming standoff in its offensive dimension, its application as a life-saving tool is older, less discussed, and in many respects more foundational. That application did not begin with the current war. It predates it. And as automated lethality on the battlefield increases, as FPV drones make open-ground movement a death sentence, the imperative for autonomous capabilities grows in direct proportion to the threat: casualty evacuation, logistics and resupply, reconnaissance of chemically or radiologically contaminated areas, and disposal of explosive ordnance. This is the dull, dirty, and dangerous work better performed by machine than human.

Long before the current era of drone warfare, explosive ordnance disposal units were fielding unmanned robotic systems for the same reason that animates the entire history of standoff: missions deemed too dangerous for human intervention. The calculus was unchanged. What has changed is the capability of the machine and the range of missions it can perform.

In the Bakhmut and Avdiivka corridors, vehicle exposure time in the open during daylight was measured in seconds before FPV strike. Conventional casualty extraction became effectively impossible in certain pockets of the line. Ukrainian units improvised: agricultural drones fitted with stretcher frames, and remote-controlled ground vehicles used for resupply and the recovery of human remains along routes that would otherwise kill any driver who attempted them.

Before, the alternative to these improvised autonomous solutions was not a better conventional solution. It was leaving people behind.

A Case Study in the Pacific

So how does this look on the battlefield? Let’s use Operation Detachment, the U.S. invasion of Iwo Jima on February 19th, 1945, as a case study.

The Marines were tasked with seizing and securing Iwo Jima. Specifically: capture Airfield No. 1 (South Field) and Airfield No. 2 (Central Field), and deny the island to Japanese forces permanently. The aims were to eliminate the Japanese early-warning radar and interceptor bases disrupting B-29 raids, establish P-51 escort fighter bases, and provide emergency landing fields for damaged bombers returning from Japan.

Ten days of pre-landing fires were requested but only three were approved, based on faulty intelligence. Using naval guns and B-24 and B-25 bombers, the Americans softened their target before landing 30,000 Marines from the 4th and 5th Divisions on February 19th, 1945. Over 36 days, more than 70,000 Marines fought at close quarters through volcanic terrain, tunnels, and fortified ridgelines. The mission was accomplished. 26,000 Americans were wounded or killed. The Japanese suffered over 21,000 killed.

Now take the same mission. The same objective. The same enemy, with the same tunnel network, the same 21,000 defenders, the same fortified ridgelines. But fight it with tomorrow’s technology.

The operation itself begins not on the beach, but weeks before anyone considers putting a Marine ashore. Persistent ISR drones, small and quiet, operating around the clock, feed a continuous stream of imagery and signals intelligence into the AI targeting system. Every tunnel entrance is located. Every hidden artillery position is identified by its acoustic signature, its heat profile, its pattern of use. The 18 kilometers of tunnel network that made Kuribayashi’s defense impenetrable in 1945 becomes a database. The AI platform simultaneously constructs and refines the strike plan, categorizing targets by type, depth, and interdependency, identifying which positions are command nodes, which are logistics hubs, and which are mutually supporting and must be struck together. It presents the commander with a prioritized, resourced, and sequenced strike plan rather than a target list. A target list tells you what to hit. A strike plan tells you what to hit first, with what, and in what order to produce the effect you need.

Pre-landing fires no longer need to be the blunt instrument of naval bombardment. At Iwo Jima in 1945, Marine commanders begged for ten days of shelling before the beach assault, but they got three, courtesy of Admiral Blandy. Even so, the limit of three days was never really the problem. Ships were firing at a Japanese tunnel network they couldn’t fully see, at defenders who simply went underground and waited out the shells. The real limiting factor was always the inability to find and fix targets faster than the enemy could adapt. An AI targeting system assigns munitions to individual positions and coordinates simultaneous engagement across the entire island. Not sequential. Not sector by sector. Everything at once, with each strike timed to prevent defenders from repositioning between impacts.

In 1945, American commanders believed the pre-landing bombardment had destroyed most of the Japanese defenders. But it had not, and Marines died for that mistake. The AI targeting system cannot make the same error. It requires confirmation of destruction before marking a target complete, and it knows the difference between a position that has been suppressed and one that has been destroyed. The hardest targets to confirm were the ones underground. But the tunnel network that made Iwo Jima so costly presents no special problem for autonomous systems. Where soldiers cannot go, micro-drones can. Systems small enough to navigate underground passages enter the tunnels directly, reaching defenders that no surface assault could touch.

And if any individual drone is shot down, the mission continues without interruption. There are no people on board to lose.

In this scenario, the beach landing—the moment that cost 5,300 Americans in the first 58 hours—does not happen. The Japanese built their entire defensive theory around one assumption: that the Americans must come ashore on the southeastern beaches. That assumption was correct in 1945. It is no longer correct. Without a beach landing, the volcanic ash terraces, the interlocking fields of fire, the months of tunnel construction oriented to cover the shoreline, all of it is a fortress that the attacker simply never has to enter.

The mission is accomplished. A small special operations element then moves to seize and secure the airfields. Not a division. Not 30,000 men across seven beach sectors. A team.

The island is denied to the enemy. The objective is identical. The human cost is not.

Kuribayashi was a brilliant commander who correctly identified the single point of American vulnerability and built his entire defense around exploiting it. Autonomous systems guided by AI don’t eliminate brilliant adversaries. They eliminate the vulnerability.

Peace Through Engineering

America does not wage war the way other nations wage war. That is not an accident. It is doctrine, built from values. We have always been willing to spend disproportionate resources to recover a single soldier, sailor, airman, or Marine, because we believe, at the level of national covenant, that the people we send to fight are not inputs to be optimized. They are citizens. And the state that sends them bears an obligation to bring them home. That belief has shaped our weapons, our tactics, our training, and our tolerance for operational cost in ways that distinguish American warfighting from our adversaries. Autonomous systems are not a departure from that tradition but its fullest expression.

For the first time in the history of warfare, the technology exists to honor that covenant completely, to accomplish the mission without sending the man. The United States must now field it at the quality and quantity that delivers a decisive advantage. Historically, the American defense establishment has struggled to achieve dominance on both axes at once.

The first one is quality, the intelligence layer: sensor fusion, target discrimination, electronic warfare resilience, swarming behavior, on-board decision-making in degraded communications environments. This is the part of the problem the United States understands. Our AI talent pool, our frontier model labs, and our operational experience integrating advanced systems are durable advantages.

The second is quantity. Mass. The capacity to produce attritable platforms at the volume the modern battlefield actually consumes. This is the part the United States has done poorly for fifty years. Our procurement system rewards exquisite, high-cost platforms in small numbers. The F-35, by every measure of capability, is the finest fighter aircraft ever built. By every measure of cost-per-unit and units-fielded, it is a cautionary tale. China is not making the same mistake. Chinese drone production capacity, by open-source estimates, exceeds NATO’s entire production capacity by an order of magnitude.

Both axes are required. Quality without quantity gives you boutique capability: beautiful systems too rare to use. Quantity without quality gives you dumb mass, attrited at the same cost ratios that destroyed Russian armor in Ukraine. The United States needs both, and the procurement system has to be restructured to deliver both. That means treating attritable autonomous platforms as a different procurement category from crewed aircraft and surface combatants. They are. They should be procured, fielded, and replaced like ammunition, not like aircraft carriers—expendable, not durable items. We have the talent advantage, but we are losing the production race.

The strategic logic for autonomy is the strategic logic the United States has faced in every major technology race of the last century. We did not build the atomic bomb because we wanted to use it. We built it because the alternative was a Reich or a USSR that built it first and used it without our restraint. The same logic applies, in sharper form, to autonomous warfare. China is racing toward decisive autonomous capability. The Chinese state operates without American legal constraints, without American norms about civilian casualties, and without American restraint about authoritarian uses of surveillance and force. A future in which the People’s Liberation Army has decisive autonomous superiority over Taiwan, the South China Sea, or American forces in the Pacific is a future in which the rules of force projection are written by Beijing.

If China moves on Taiwan, the form will not be a 1944-style amphibious assault. It will be cyber, information operations, autonomous logistics interdiction, and drone swarms over Taiwanese command nodes: the full toolkit, applied at strategic scale, designed to break Taiwanese will before any visible kinetic engagement begins. The only thing that prevents that future is a credible American autonomous capability that makes the Beijing calculation come out the other way. Recent American operations against Iranian and Venezuelan targets demonstrated autonomy at strategic effect. Beijing’s silence in response was calculation, not indifference. The window in which we hold decisive advantage is the window in which we deter. We must not waste it.

The standoff that started with a stick has reached its current form. There will be further milestones; autonomy is not the last. What will not change is the obligation: the obligation of the state to its citizens, the obligation of the leadership to the men and women it might one day send into harm’s way. We owe them our best engineering, our largest arsenal of attritable systems, and our most capable AI. We owe them Peace through Strength written into the budget, not just into the speech. The technology that keeps them home is a moral asset, not a moral hazard.


What’s wrong with your mind? You guys wake up in the morning and the first thing you think about is always war.

Great article.

Your conclusion: "The technology that keeps them home is a moral asset, not a moral hazard."

Morality has nothing to do with war.

The phrase is really: "The technology that keeps them home is a population motivation asset. The population in a democracy, and even in Russia, is more motivated to support the war if their sons and daughters are not in danger."