The Trust Wall: Why AI’s Next Billion Users Will Come Through Trust Networks

Lessons from YouTube’s Global Expansion


Sakina Arsiwala is a product and growth leader and former Twitch executive who helped scale Google, YouTube, and Nextdoor internationally. She is currently an a16z New Media fellow.

The YouTube Lesson: Content is a Geopolitical Weapon

Years ago, I PM’ed Google’s International Search and then led YouTube’s international expansion, launching in 21 countries in just 14 months. I wasn’t just localizing a product; I was building local content partnerships and navigating the GTM minefields of legal, policy, and access. Most recently, I managed Community Health (Trust & Safety) at Twitch. Along the way, I founded two startups.

Today’s AI landscape feels eerily reminiscent of that early Google & YouTube growth. My career has taught me one truth: Globalization is not a product feature; it is a geopolitical negotiation. The hardest lesson was that distribution was never purely technical. Growth depended on local partners, cultural translators, and trusted community figures who bridged the gap between a global platform and a local audience.

I’ve lived through the GEMA blackouts in Germany, where a music rights agency essentially opted a whole nation out of the pan-EU YouTube rollout. I’ve lived through lèse-majesté arrest warrants in Thailand, where I couldn’t fly through the country because, as the face of YouTube, I risked arrest for content deemed defamatory to the King. I saw Pakistan bring down the entire internet to block a single video. I remember when our offices in India were physically attacked because a global algorithm collided with local sanctity.

What we were really navigating wasn’t just policy or infrastructure. It was trust friction.

In every market, someone had to absorb the cost of figuring out what was safe, acceptable, and valuable before users would engage. That cost compounds. And over time, it becomes a kind of Trust Tax, paid upfront by a small group, and amortized across everyone else.

Those same fault lines are now reappearing in AI—only now they are harder, faster, and more visible. A recent impasse between the U.S. federal government and Anthropic has triggered public debate, while OpenAI faces increasing scrutiny over its public-sector partnerships. We are witnessing a shift where user adoption is no longer just about utility—it is increasingly influenced by ideology. In this climate, trust is fragile; a single perceived erosion can trigger a rapid, mass migration of users.

While Google is doubling down on its embedded trust strategy, leveraging the familiarity of its existing ecosystem of Workspace and Search to bridge the gap, the global landscape is fraying. The EU’s stringent guardrails, China’s frantic development race, and the rising tide of AI Nationalism have put the world on alert.

The lesson for 2026 is clear: Institutional trust and cultural permission are now inseparable from the product itself. You cannot build an Intelligence OS without a bedrock of Trust.

This is the Sovereign Wall—the structural boundary where global intelligence collides with local control. But from a product perspective, it manifests as something more immediate: the Trust Wall.

Every global AI system now expands until it collides with it—the point where adoption is no longer determined by capability, but by whether users, institutions, and governments trust it within their own context.

The internet was borderless. Intelligence will not be.

The Sunset of the Explorer Era

The first billion AI users were explorers and tech-optimists. But the Explorer era is over. For the last three years, we have lived in a time of prompt-craft and digital alchemy, visiting destination apps like ChatGPT or Claude as if they were digital shrines to witness the miracle of generation. In this era, the only metric that mattered was Model Parity: Who topped the latest benchmark? Who has the most parameters?

But as we cross into 2026, the campfire of the Explorer era is flickering out. We are no longer building toys for the curious; we are pivoting toward Intelligence Operating Systems, the invisible, ubiquitous pipes that must power the life of a solo-entrepreneur in São Paulo or a community health worker in Jakarta.

These users aren’t Explorers. They are Utility-Seekers. They don’t want to chat with a ghost in the machine; they want a tool that navigates the friction of their reality. This is the real Crossing the Chasm in the quest for the next billion users. And it is here, at the edge of the map, where the Silicon Valley Global API dream crashes into the hardest reality of our time: The Sovereign Wall.

The critical shift is this: AI adoption is no longer primarily a model problem. It is a distribution and trust problem. Frontier labs will continue to push capability forward, but the next billion users will not arrive because a model scores higher on benchmarks. They will arrive because the intelligence layer reaches them through institutions, creators, and communities they already trust.

2026 Reality: AI as a National Infrastructure Problem

In 2026, the challenge is no longer about making the model smarter; it is about making the model permitted. The Sovereign Wall is the point where General Intelligence meets National Identity. Across the world we are already seeing the early contours of this wall: data localization requirements, national AI compute programs, and government-backed model initiatives from India to the UAE to Europe. What began as cloud infrastructure policy is quickly evolving into intelligence policy. It is where a country refuses to be a Data Colony and demands that the intelligence powering its citizens lives within its own Sovereign Vaults, speaks its own lore, and respects its own borders.

When you see the CEOs of Google (Sundar Pichai), OpenAI (Sam Altman), Anthropic (Dario Amodei), and DeepMind (Demis Hassabis) on stage with Prime Minister Modi at the India AI Impact Summit 2026, you are seeing the Sovereign Wall in real time. PM Modi’s M.A.N.A.V. Vision (Moral and Ethical Systems, Accountable Governance, National Sovereignty, Accessible AI, and Valid Systems) is a clear signal: if frontier labs attempt a Direct-to-Consumer land grab, they will be regulated into obsolescence. Trust is the only currency that bypasses these borders.

The Weak Network Effect Problem and Why It Forces a New Playbook

Unlike social platforms, where each additional user increases value for every other user, AI’s value is largely local. My thousandth prompt does not directly make the system more valuable for you. The data flywheel improves the model, but the user experience remains personal, not social. AI is personal. It can be emotional. But at its core, it is a utility.

This creates a structural problem: AI does not benefit from the kind of compounding social network effects that powered the last generation of platforms. Without a native social graph, the industry defaults to a high-burn loop, chasing early adopters, power users, and the tech elite. That strategy works in the Explorer Era. It does not scale to the next two billion users.

And more importantly, it breaks down at the Sovereign Wall. Because when network effects are weak, trust does not emerge organically; it must be imported.

The Shift: From Network Effects to Trust Effects

If AI cannot rely on social network effects to drive adoption, it must rely on something else: Trust networks. This is the critical shift:

From Customer Acquisition → to Intermediary Empowerment

YouTube scaled because it rode on existing human networks of trust. AI must do the same. Instead of trying to build direct relationships with billions of users, the winning strategy is to:

empower the people who already have those relationships

leverage the trust they’ve already earned

and distribute intelligence through those channels

Why This Matters

In a world shaped by the Sovereign Wall:

Distribution is constrained

Direct-to-consumer is fragile

Trust is local, not global

Without strong network effects, AI cannot brute-force its way to scale. It has to flow through trust. AI doesn’t have network effects. It has trust effects.

The Solution: The Intermediary Era

How did YouTube actually gain a foothold in international markets?

Not by being a better video player. Not by localizing UI strings. We won because we became the platform of choice for people who already had local trust. In every market, adoption didn’t start with YouTube. It started with Identity Anchors: people and communities who already held cultural authority:

A Bollywood fan page curating rare Shah Rukh Khan clips for diaspora communities in Dubai

Anime superfans in the US building deep lore ecosystems that mainstream media didn’t serve

Local comedians, teachers, and remix artists translating global content into culturally legible formats

These creators weren’t just uploading videos. They were contextualizing the internet for their audience. They acted as Trust Brokers bridging the gap between a foreign platform and a local user. YouTube succeeded because it became the invisible infrastructure powering these Identity Anchors.

The Missed Insight: Direct-to-Consumer Hits the Sovereign Wall

Most AI companies today are still operating with a Direct-to-Consumer mindset:

Build a better model → expose it via chat → acquire users directly.

This works, until it doesn’t. Because in high-friction markets, users don’t adopt technology directly. They adopt it through someone they trust.

YouTube didn’t scale globally by convincing billions of users one-by-one. It scaled by powering the people who had already earned the audience’s trust. That is what invisible infrastructure actually means:

You don’t own the relationship. You power the relationship.

And at scale, that is far more defensible.

From Chat to Agents: Powering the Trust Brokers

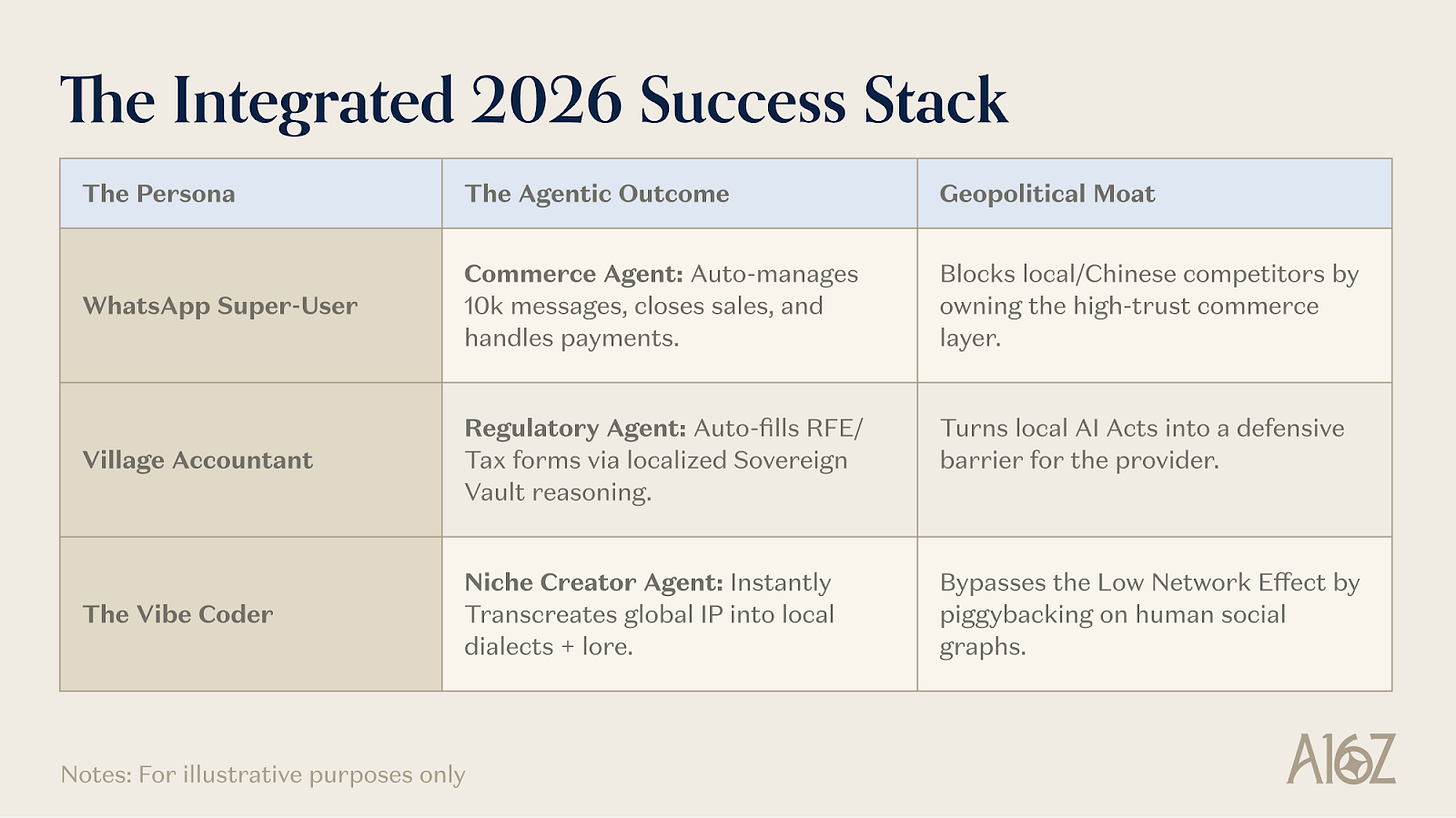

This is where the shift from chat → agents becomes critical. Chat is a tool for individuals. Agents are leverage for intermediaries. If we take the framing of Anthropic’s Ami Vora, “build for the most tired person,” then in many markets that person is the Trust Translator:

the educator adapting foreign concepts

the entrepreneur navigating local bureaucracy

the community leader managing information overload

We win by solving their Trust Latency, the gap between global intelligence and local usability. That requires an Agentic Success Stack that actually does the work:

For the Educator:

Sora / GPT-5.2 transcreates curriculum, swapping an American football analogy for cricket, preserving meaning while aligning with culture

For the Solo Entrepreneur:

An agent that doesn’t just explain a Singapore tax form, but completes and files it via local APIs

For the Community Leader:

Contextual memory layered onto WhatsApp, turning 10,000 messages into structured action, preserving signal and community norms

Why This Works: Solving Trust Latency at the Last Mile

To understand why this model scales, you have to understand Trust Latency. In many parts of the world, the bottleneck is not access to technology. It’s the time, risk, and uncertainty required to trust it. Technology isn’t adopted through ads; it’s adopted through vouching.

The mistake most AI companies are making is trying to pay the Trust Tax centrally—through branding, distribution, or product polish. But trust doesn’t scale that way.

The fastest path is to outsource the Trust Tax to those who are already paying it: creators, educators, and operators embedded in local contexts. These are people who have already worked through trial and error on behalf of their audience, figuring out what works, what breaks, and what actually matters in a local context. They absorb that risk so their audience doesn’t have to.

By empowering these Trust Brokers:

CAC approaches zero: distribution rides on existing trust

LTV increases: utility is locally relevant, not generic

Adoption accelerates: trust is inherited, not earned from scratch

You effectively gain a global sales force you don’t have to pay for. One that is more credible, more efficient, and more deeply embedded than any centralized go-to-market motion. You are no longer building a product for users. You are building leverage for the people users already believe.

That is how YouTube scaled globally. And it is how AI will cross the Sovereign Wall.

Sovereign Vaults: The Geopolitical Moat

The endgame for Marc Andreessen’s Techno-Optimism isn’t fighting regulation; it’s productizing it. The race against China’s DeepSeek and Kimi isn’t won by ignoring borders; it’s won by owning the vault.

What is a Sovereign Vault? It is a residency-first, localized instance of a model that lives within a nation’s Digital Public Infrastructure (DPI).

The Geopolitical Moat: By giving nations like India or Brazil digital sovereignty over their models, weights, and data, we fundamentally shift the balance of control. Intelligence is no longer mediated by foreign platforms; it is governed within national boundaries. This doesn’t “block” foreign adversaries outright—but it raises the cost of influence, reduces external dependency, and limits the surface area for control, extraction, or unilateral intervention.

The Identity Anchor: By anchoring our models in local lore and legal reality, we build a moat that General Intelligence can’t touch.

The Feedback Loop: Solving for the hyper-local nuances of a Malaysian tax permit isn’t a distraction; it’s a Model Accelerator. It provides the Cultural Elasticity our base models need to remain the world’s most capable intelligence.

There is a real tension here. The promise of AI is universal intelligence, but sovereignty pushes the ecosystem toward fragmentation. If every nation builds its own stack, we risk a patchwork of incompatible systems, uneven safety standards, and duplicated effort. The challenge for frontier labs is not simply to scale intelligence, but to design architectures that allow local control without collapsing the benefits of global capability.

Three Structural Shifts in the Intermediary Era

1. AI Distribution Will Move into Existing Trust Networks

AI will not scale through standalone apps. It will embed into messaging platforms, creator workflows, education systems, and small business infrastructure—because trust is already established there. Without strong network effects, distribution must ride on existing human relationships.

2. National AI Infrastructure Will Become a Default Requirement

Governments will increasingly require local model hosting, sovereign compute, or regulatory oversight for critical AI systems, accelerating the emergence of sovereign vault architectures.

3. The Creator Economy Will Become an Agent Economy

Creators will not just produce content—they will deploy agents that perform real work for their communities. These agents will act as extensions of trusted individuals, inheriting their credibility and distributing intelligence through trust networks.

There is, of course, another possible future. A single dominant assistant could emerge, deeply embedded in operating systems, browsers, and devices, creating a direct relationship between user and model that bypasses intermediaries entirely. If that happens, the trust layer collapses into the assistant itself.

But history suggests something more pluralistic. Even the most dominant platforms—from mobile operating systems to social networks—ultimately grow through ecosystems. Intelligence may be universal, but trust remains local. Whichever architecture ultimately wins, the underlying challenge remains the same: AI adoption is no longer primarily a model problem. It is a distribution and trust problem.

Conclusion: Niche is the Only Real Global

The greatest fallacy of the Explorer Era was the belief that intelligence is a commodity—a single, global API that would manifest identically in a Manhattan boardroom and a village in Karnataka. The Sovereign Wall has exposed a harder truth: intelligence may be universal, but adoption is not.

Nations and local institutions don’t want a black-boxed external system. They want control, context, and the ability to shape intelligence within their own boundaries. They don’t want the application; they want the pipes: the infrastructure, the security, and the compute that empower their own citizens to build for themselves.

Growth in 2026 is no longer about discovering a universal UX. It is about product elasticity—the ability for intelligence to adapt to local context, regulation, and culture without losing capability. If we continue to chase the global consumer directly, we remain a foreign layer—fragile, replaceable, and subject to the same disruptions I saw at YouTube.

But when we pivot to Intermediary Empowerment, the model fundamentally changes. We move from chat to agency, from convincing users to empowering Trust Brokers, and from fighting regulation to turning it into a moat. AI doesn’t scale through models—it scales through trust.

The winner of the AI race will not be the company with the smartest model. It will be the company that is most effective at making the local hero—the teacher, the accountant, the community leader—ten times more powerful. Because in the end, intelligence travels through systems, but adoption travels through people.


This maps to something I've seen firsthand in production. We run an AI extraction system for a consumer products business, and the adoption pattern was exactly trust-network shaped — not top-down rollout, not feature-driven.

The system went from 'interesting demo' to 'daily dependency' because one domain expert started using it, got good results, and told three colleagues. Those three told their teams. Within two months we had organic adoption across departments that no amount of training sessions had achieved.

The interesting wrinkle: the trust wasn't in the AI. It was in the person who vouched for it. When the original champion moved to a different project, adoption in her old team actually dipped until someone else stepped into that trust node role.

The billion-user question isn't 'how good is the model' — it's 'who's the person in each trust network that makes the introduction.' That's the GTM challenge this piece gets right.

Dealing with this now, people problems. Trust. Educating. Distribution.