The Evidence Gap: Why Courts Can't Balance State AI Regulation

The dormant Commerce Clause’s evidence problem, and how to fix it

One of the big narrative arcs we’re following closely at a16z is what happens with AI legislation in Washington DC. Specifically, the big debate right now is: to what degree are individual states allowed to regulate AI, and how far does the Constitution’s Commerce Clause limit them?

In this piece, the a16z Policy team goes deep on a critical problem: our judicial process requires a ‘cost-benefit analysis’ to assist these decisions. But it’s very hard for a judge to actually DO this kind of analysis! This piece from Jai and Matt will get you up to speed on how challenging this issue is for our court system, and how pressing it is for us to solve.

The dormant Commerce Clause places a constitutional limit on states’ authority to enact laws that burden out-of-state commerce. When a state law is challenged, courts must weigh its costs against its benefits. This cost-benefit analysis is critical for Little Tech: a patchwork of state laws with disproportionate out-of-state costs will make it harder for startups to compete against deep-pocketed platforms that are better positioned to navigate a complex regulatory landscape.

The problem is that judges almost never have the data to do this well.

The doctrine demands cost-benefit analysis; the record rarely supplies it. And if judges are ill-equipped to determine whether state AI laws violate the dormant Commerce Clause, judicial review will be less accurate: unconstitutional laws with high compliance costs may survive, making it harder for Little Tech to compete, while laws that serve legitimate state interests and impose minimal costs on startups and other firms may be struck down for lack of an evidentiary record to support them.

The possibility of a dormant Commerce Clause challenge to a state AI law is no longer hypothetical: last week, xAI filed a federal lawsuit challenging Colorado’s AI Act, in part on dormant Commerce Clause grounds.

Policymakers should enact laws and implement regulations that give judges better tools for conducting the cost-benefit analysis that dormant Commerce Clause adjudication requires. These reforms should (1) build a better evidentiary record on the burdens, benefits, and alternatives associated with state AI laws, and (2) equip judges with the analytical tools to interpret and apply that evidence.

The dormant Commerce Clause’s evidence problem

The Constitution grants Congress the power to regulate interstate commerce. Courts have long read this grant as implicitly limiting states’ ability to pass laws that interfere with the flow of commerce across state lines—a restriction known as the dormant Commerce Clause.

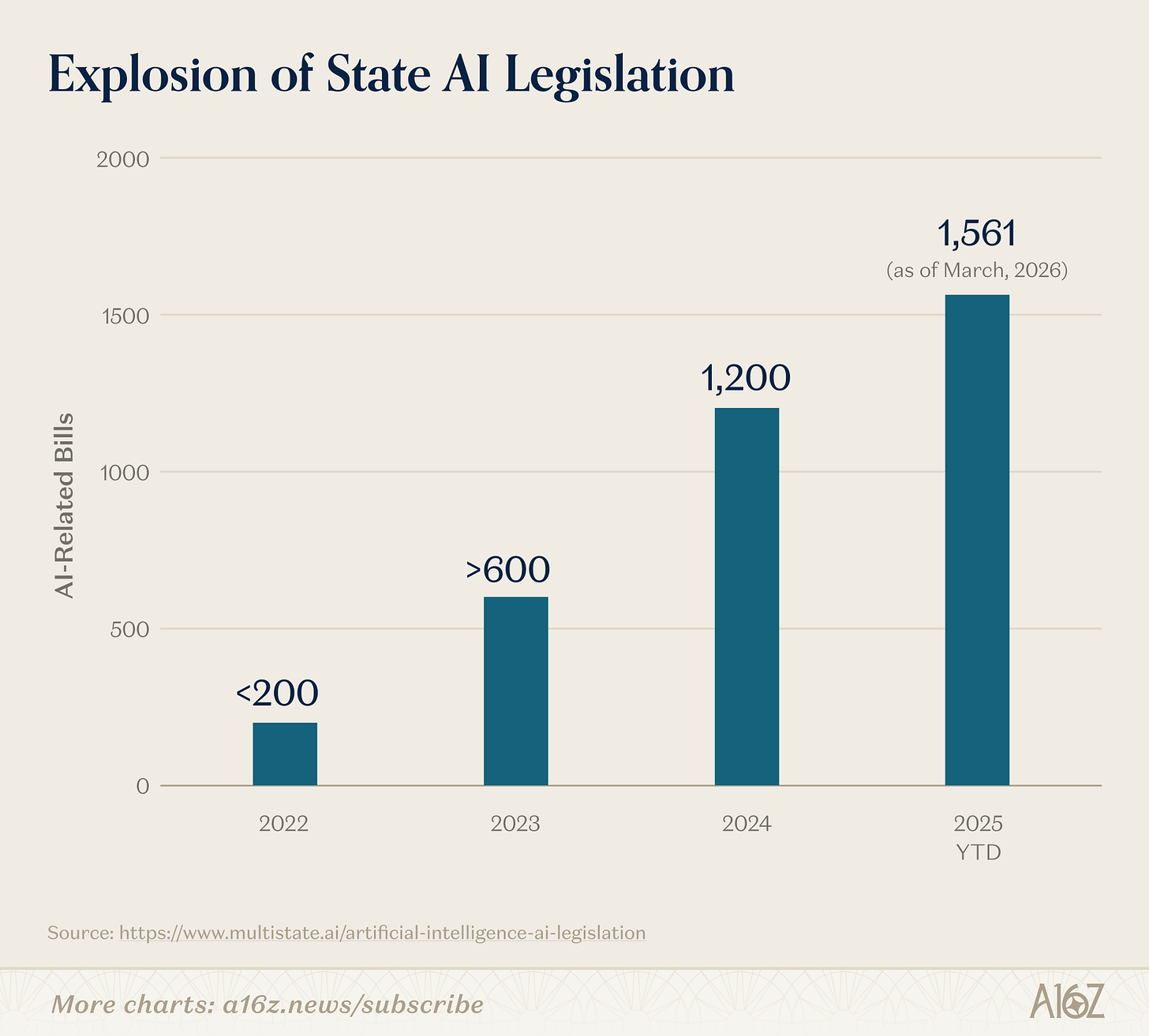

These limits are increasingly significant in AI governance, where states are legislating at an extraordinary pace. In 2025, more than 1,000 state-level AI bills were introduced across all 50 states. In 2026, the pace has accelerated: as of March, lawmakers in 45 states had already introduced over 1,500 AI-related bills, surpassing the total for all of 2025. With states driving AI governance in the U.S., the constitutional limits on their authority will shape the regulatory landscape.

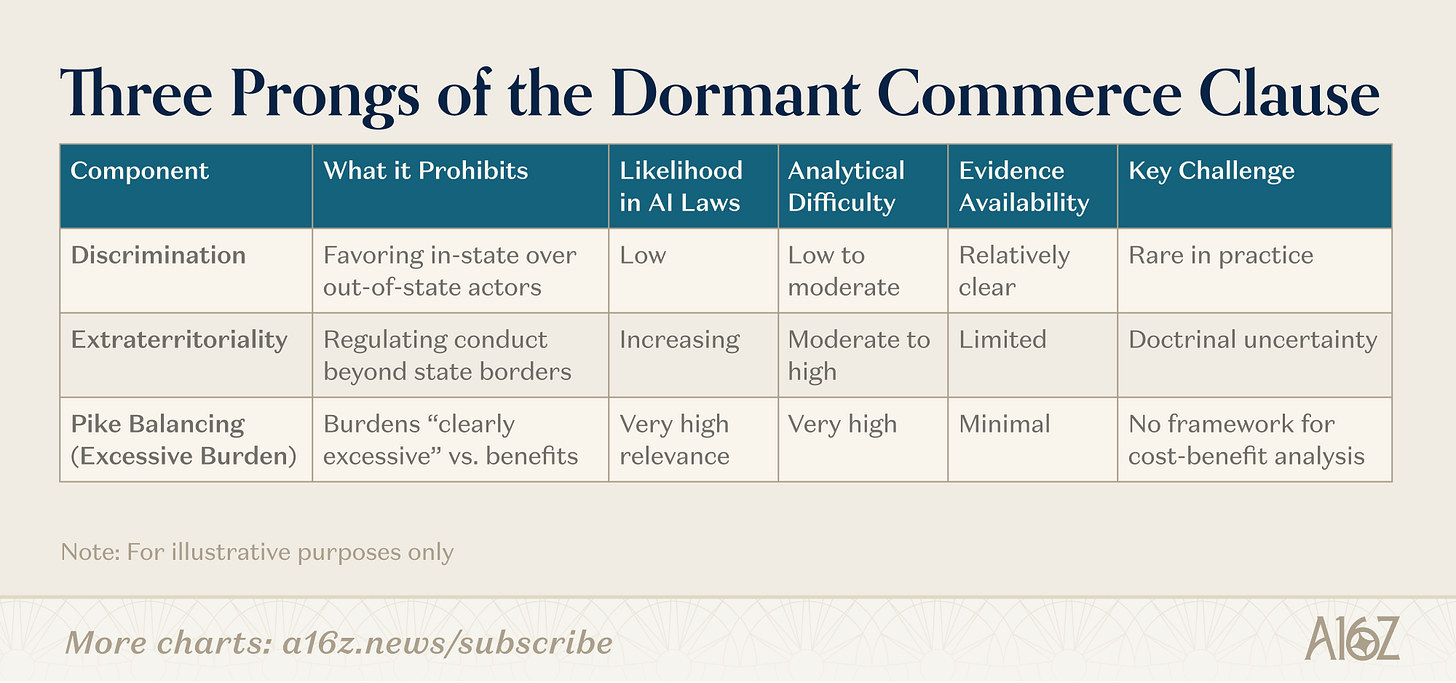

The dormant Commerce Clause has three components.

An anti-discrimination principle bars states from favoring in-state interests over out-of-state ones.

An anti-extraterritoriality principle bars states from regulating conduct that occurs beyond their borders.

And an anti-excessive burden principle bars states from imposing burdens on interstate commerce that are clearly excessive relative to the law’s local benefits.

Of these, discrimination is the most constitutionally suspect, but the least likely to appear in state AI legislation. States rarely design AI laws to treat out-of-state providers worse than in-state ones.

Extraterritoriality is a more pressing concern.

As several scholars and tech policy experts have described, it is increasingly routine for a state to regulate conduct occurring entirely outside its borders—for example, a disclosure law in state A that burdens open source developers in state B, regardless of whether they specifically intend to offer their products in state A. The role of extraterritoriality in dormant Commerce Clause analysis remains unsettled, and how judges understand AI technology and business operations across state lines may shape how they apply this concept in future cases.

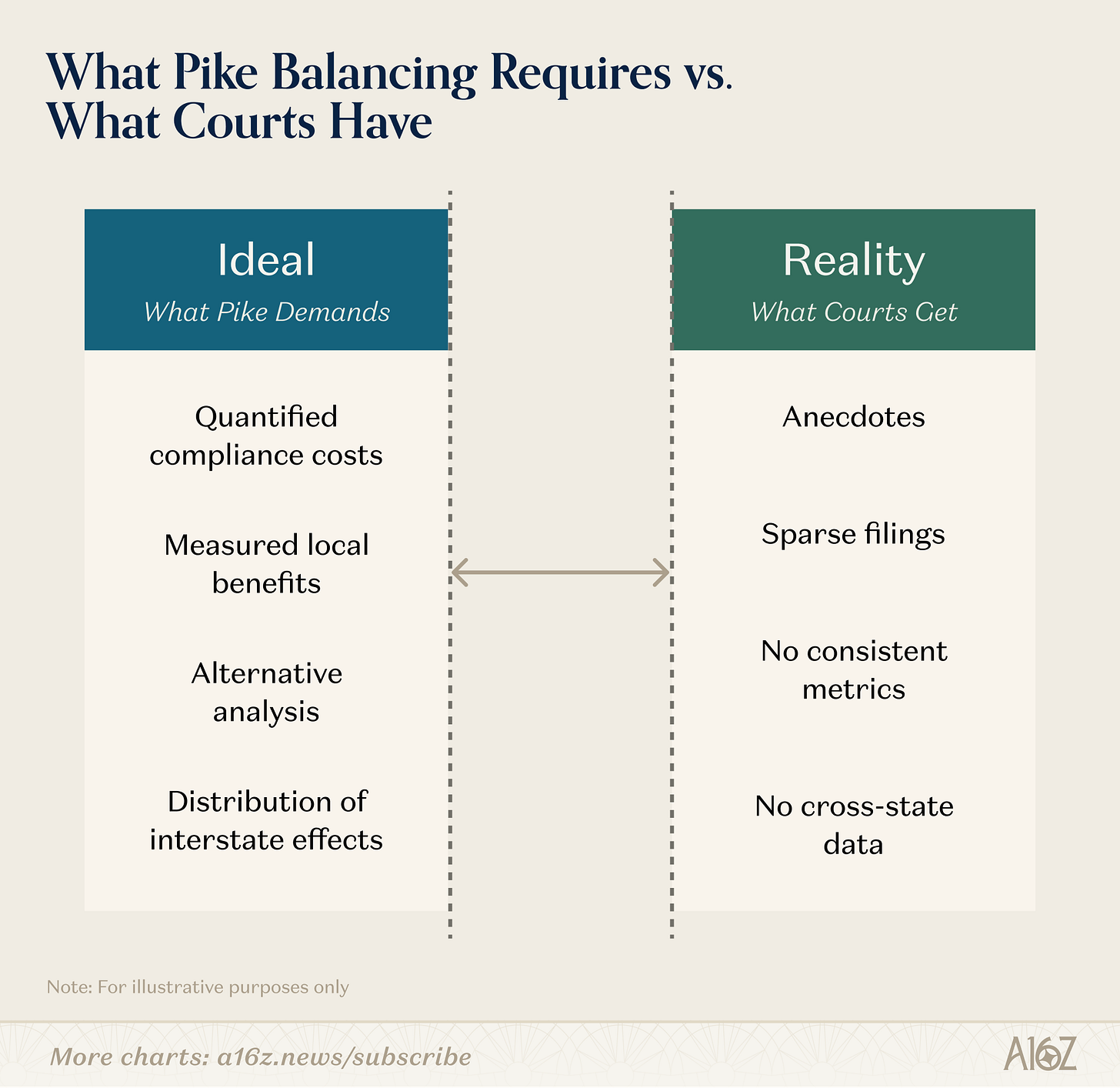

But the hardest analytical challenge lies in the third prong: the anti-excessive burden principle, known as Pike balancing after the 1970 case Pike v. Bruce Church, Inc.

This doctrine requires courts to determine whether a law’s burden on interstate commerce is “clearly excessive in relation to the putative local benefits.” That is a cost-benefit test, and judges have almost nothing to work with: the record before them rarely contains systematic data on either side.

Pike itself embodied the challenge. The Court called the state’s requirement a “straitjacket,” declared the state’s regulatory interest “minimal,” and moved on. It offered no methodology for quantifying burdens, measuring benefits, or comparing the two.

The methodological challenge is compounded by the lack of clarity on the metrics: because the test is “clearly excessive,” a law might not fail Pike balancing simply because it imposes $2 in costs relative to $1 in benefits, but would $3 in costs be dispositive? What about $4? And what about cases where costs and benefits are apples and oranges, like economic fragmentation and cybersecurity?

Despite these open questions, subsequent dormant Commerce Clause cases have done little better. The result is a doctrine that hinges on empirical assessments but provides no framework for making them. As two legal scholars have argued, “cost-benefit analysis under Pike is much more demanding than current judicial practice contemplates, and it cannot be done by federal courts with any rigor absent a sea change in the way they assess DCC [dormant commerce clause] problems.”

Fortunately, policymakers can help fill this evidentiary gap.

For example, the White House’s “Ensuring a National Policy Framework for Artificial Intelligence” Executive Order (the “EO”) tasked the Commerce Department with identifying burdensome state laws, which will necessarily involve an assessment of burdens. The EO also created a Justice Department task force to challenge state laws that may violate the dormant Commerce Clause, which demands analysis of extraterritorial costs and in-state benefits. Executive branch officials will likely develop data and analytical tools to do this work, and may get some of that information into judges’ hands. Such evidence gathering could, of course, also be used to develop the evidentiary record in support of well-tailored laws.

But in the long run, more will be needed: to review dormant Commerce Clause challenges, judges will need both access to evidence and the tools to interpret and evaluate it.

Policymakers can help on both fronts. Both the executive and legislative branches can institutionalize practices that produce the data and analysis the judicial branch needs. The proposals below are organized in two parts:

first, generating better evidence about the costs and benefits of state AI laws; and

second, helping courts interpret and apply that evidence.

I. Generate better evidence

Pike balancing demands cost-benefit analysis, but cost-benefit analysis is not without controversy.

Critics have argued that it cannot meaningfully quantify values like human life, health, and environmental protection—that some benefits are “priceless.” But there is extensive literature and decades of government practice demonstrating just how cost-benefit analysis can work in practice, even in areas that seem to resist quantification. The federal Office of Information and Regulatory Affairs (OIRA) has conducted cost-benefit analysis of federal regulations across every presidential administration since the Reagan administration, and scholars have documented both how the practice works and how it can be improved.

This post does not attempt to resolve the longstanding debate over the merits and limits of cost-benefit analysis. Its goal is more practical: if Pike balancing requires judges to identify costs and benefits and weigh them against each other, how can policymakers help to generate the kind of evidence that would allow courts to do so with better data than they currently have?

Policymakers can address this challenge by designing laws to produce evidence as a built-in feature of the legislative process, investing in ongoing measurement and reporting, and using experimentation to test regulatory approaches before locking them in.

A. Design laws to produce evidence

The simplest fix is for the legislative process itself to generate the data courts will later need.

Pre-enactment evidentiary statements

Every state AI law should include a standardized evidentiary statement in the bill file or administrative record, covering three categories:

a burden estimate (compliance costs, operational disruption, price effects, supply-chain impacts, and anticipated effects on product quality or user welfare, with ranges and assumptions disclosed);

benefit evidence (the harm being addressed, its baseline incidence, the expected effect size, and the degree of uncertainty); and

an alternatives analysis (less-burdensome options considered and reasons they were rejected).

Incorporating these statements into the policy process would discipline lawmaking by forcing lawmakers to articulate a law’s anticipated effects, and would create a contemporaneous record for courts to draw on in subsequent challenges.

The goal of these statements is to ensure that the policymakers and institutions best positioned to articulate a law’s benefits and costs do so contemporaneously, as they are preparing legislation for introduction and shepherding it through the process. The process should be designed with that objective in mind, and should avoid becoming a barrier to legislating. The statements should identify attributes of a bill, not add friction to the already substantial challenge of enacting a law.

In the long run, these types of evidence-generating mechanisms may actually give laws more staying power, since many areas of legal review—including the First Amendment—require a showing of a legislature’s purpose in enacting a law and its consideration of relevant alternatives. In some cases, creating this record might make it harder for laws to survive judicial review, while in others it might raise the bar for contesting a law’s value and make it more resistant to legal challenge.
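
To make the idea concrete, the three-part evidentiary statement described above could be captured as a structured record. The following is only a rough sketch: the class names, field names, bill, and dollar figures are all hypothetical, and it assumes (contrary to much of the discussion of qualitative factors) that both burdens and benefits can be expressed as dollar ranges.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Estimate:
    """A dollar range plus the assumptions behind it (hypothetical schema)."""
    low: float
    high: float
    assumptions: tuple = ()

    def __post_init__(self):
        if self.low < 0 or self.high < self.low:
            raise ValueError("expected 0 <= low <= high")


@dataclass(frozen=True)
class Alternative:
    """A less-burdensome option that was considered and rejected."""
    description: str
    reason_rejected: str


@dataclass(frozen=True)
class EvidentiaryStatement:
    bill: str
    harm_addressed: str
    burden: Estimate    # compliance costs, price effects, and so on
    benefit: Estimate   # monetized value of the harm avoided
    alternatives: tuple = ()

    def ratio_range(self):
        """Lowest and highest burden-to-benefit ratio the ranges allow."""
        if self.benefit.low == 0:
            raise ValueError("benefit lower bound must be positive")
        return (self.burden.low / self.benefit.high,
                self.burden.high / self.benefit.low)


# Illustrative values only; none of these figures come from a real bill.
statement = EvidentiaryStatement(
    bill="Hypothetical Bill 101",
    harm_addressed="undisclosed automated decision-making",
    burden=Estimate(2_000_000, 6_000_000, ("500 covered firms",)),
    benefit=Estimate(1_000_000, 4_000_000, ("incidence from complaint data",)),
    alternatives=(
        Alternative("exempt firms below a size threshold",
                    "would exclude most deployers"),
    ),
)
low, high = statement.ratio_range()
print(f"burden-to-benefit ratio between {low:.1f} and {high:.1f}")
```

The sketch deliberately stops short of saying which ratio is “clearly excessive.” It only shows how disclosed ranges and assumptions would let a court see how wide the uncertainty is before it draws that line.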

Post-enactment review

State AI laws should mandate periodic review, with reports at six- to twelve-month intervals on whether projected costs and benefits have materialized.

To ensure credibility, reviews should be conducted by a government office like OIRA, which might then contract with academics, think tanks, or other third parties to assist with the assessment. The review should incorporate input from a range of stakeholders. Post-enactment data is especially valuable in Pike balancing, where the question is whether burdens are “clearly excessive” relative to benefits. Projections are useful; actual outcomes are better.

Compliance cost disclosures

Any disclosure mandate in a state AI law should include a voluntary option for regulated entities to report their compliance costs, using quantitative or qualitative metrics. This creates a bottom-up evidence stream from the parties most directly affected by regulation, supplementing top-down legislative estimates.

B. Create better data about regulatory effects

Beyond the legislative process, policymakers should invest in ongoing data collection by states, the federal government, companies, and researchers.

States

State departments of commerce should publish baseline data on their AI industries (such as the number of companies, investment volumes, and market concentration), with comparative analysis over time.

State attorneys general should publish biannual audits covering two sides of the ledger: the benefits of their own state’s AI laws (including for enforcement of consumer protection and civil rights laws) and the costs that other states’ laws impose on their consumers and economy.

This two-sided approach would give courts a more complete picture of how regulatory effects are distributed across state lines.

The federal government

OIRA should conduct cost-benefit analyses of state laws that the Attorney General or Commerce Secretary identifies as potentially imposing excessive burdens on interstate commerce, and make those analyses public. OIRA could review both proposed and enacted laws, providing courts with a rigorous analytical baseline.

Separately, NTIA and NIST should publish an annual study reviewing state AI laws in aggregate, quantifying costs and benefits and recommending how states can maximize local benefits relative to out-of-state burdens. Where appropriate, the study could incorporate OIRA’s law-specific analyses.

Companies

Firms are often the best source of data on actual compliance costs, but may fear regulatory reprisal.

The Commerce Department should establish a voluntary, anonymous reporting mechanism—ideally through an anonymized web portal—with structured reporting fields covering the states in which affected production occurs, the geographic distribution of compliance costs, whether the law shifts costs out of state, and qualitative effects on product quality or user welfare. Commerce could aggregate that data and publish it in regular reports, giving courts industry-sourced evidence without exposing individual companies.
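
As a rough illustration of how such a portal’s structured fields might be aggregated before publication, consider the sketch below. Everything in it is an assumption made for illustration: the field names, the sample figures, and in particular the `MIN_REPORTS` suppression threshold, which is one common way to avoid exposing individual reporters and is not drawn from any statute or agency rule.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import median

MIN_REPORTS = 3  # assumed threshold: suppress laws with too few reporters


@dataclass(frozen=True)
class CostReport:
    """One anonymous submission (hypothetical schema)."""
    law: str
    enacting_state: str
    production_state: str         # where affected production occurs
    cost_by_state: dict           # state -> compliance cost in dollars
    shifts_costs_out_of_state: bool
    qualitative_note: str = ""    # effects on product quality or user welfare


def aggregate(reports):
    """Summarize reports per law, dropping laws with too few submissions."""
    by_law = defaultdict(list)
    for report in reports:
        by_law[report.law].append(report)

    summary = {}
    for law, group in by_law.items():
        if len(group) < MIN_REPORTS:
            continue  # too few reporters to publish without exposing a firm
        totals = [sum(r.cost_by_state.values()) for r in group]
        out_of_state = sum(
            cost
            for r in group
            for state, cost in r.cost_by_state.items()
            if state != r.enacting_state
        )
        grand_total = sum(totals)
        summary[law] = {
            "reports": len(group),
            "median_cost": median(totals),
            "out_of_state_share": out_of_state / grand_total if grand_total else 0.0,
        }
    return summary


# Illustrative data only.
reports = [
    CostReport("State A disclosure law", "A", "B", {"A": 100.0, "B": 300.0}, True),
    CostReport("State A disclosure law", "A", "A", {"A": 200.0}, False),
    CostReport("State A disclosure law", "A", "C", {"C": 400.0}, True),
    CostReport("State D audit law", "D", "D", {"D": 50.0}, False),
]
summary = aggregate(reports)
print(summary)
```

In this toy data, the State D law is omitted from the published summary because only one firm reported on it, while the State A law shows what share of reported costs fell outside the enacting state.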

Researchers

State and federal governments should fund economic research evaluating the out-of-state costs and in-state benefits of state AI laws.

Mechanisms could include dedicated grant programs at NSF or NIST for research on the interstate economic effects of AI regulation, data-sharing agreements that give academic researchers access to the compliance data collected through the Commerce Department’s anonymous reporting mechanism, and funded partnerships with university research centers specializing in regulatory economics, technology policy, or law and economics.

C. Use experimentation to generate real-world evidence

When the effects of a regulatory approach are genuinely uncertain, the best way to generate evidence is to test it.

Pilot programs allow policymakers to observe how regulation works in practice before scaling it—and they produce exactly the kind of empirical record that Pike balancing demands.

Several models are available. Regulatory sandboxes permit firms to operate under modified rules for a defined period, generating data on how specific regulatory requirements affect compliance costs, innovation, and market behavior. Policy experiments allow states to test alternative regulatory designs in parallel, comparing outcomes across different approaches and producing direct evidence of which designs achieve local benefits at lower cost to interstate commerce. AI opportunity zones, as proposed by the Civitas Institute, would designate geographic areas for accelerated AI deployment under tailored regulatory conditions, generating evidence about the relationship between regulatory burden and economic growth.

These mechanisms share a key advantage over conventional regulation: they are designed to be revised. Because they operate on a limited scale and for defined periods, they allow policymakers to gather evidence and adjust course before a regulatory approach is locked into statute—and before courts are asked to evaluate a law whose effects are speculative. They also give courts something they rarely have in Pike balancing: a factual record drawn from observed outcomes rather than assumptions.

To realize this advantage, every pilot program should include a mandatory reporting requirement, with results published at six- to twelve-month intervals covering effects on compliance costs, innovation, consumer outcomes, and interstate economic activity. These reports should be designed with judicial use in mind—structured to address the kinds of questions Pike balancing raises, including how burdens distribute across state lines and whether less-restrictive alternatives achieved comparable benefits.

II. Help courts use evidence

Better evidence is necessary but not sufficient. Pike balancing requires judges to compare qualitatively different kinds of burdens and benefits—economic costs against consumer safety gains, innovation effects against privacy protections—and the doctrine gives them almost no guidance on how.

The proposals in this section focus on getting evidence into the courtroom and building judges’ capacity to work with it.

A. Improve how evidence reaches courts

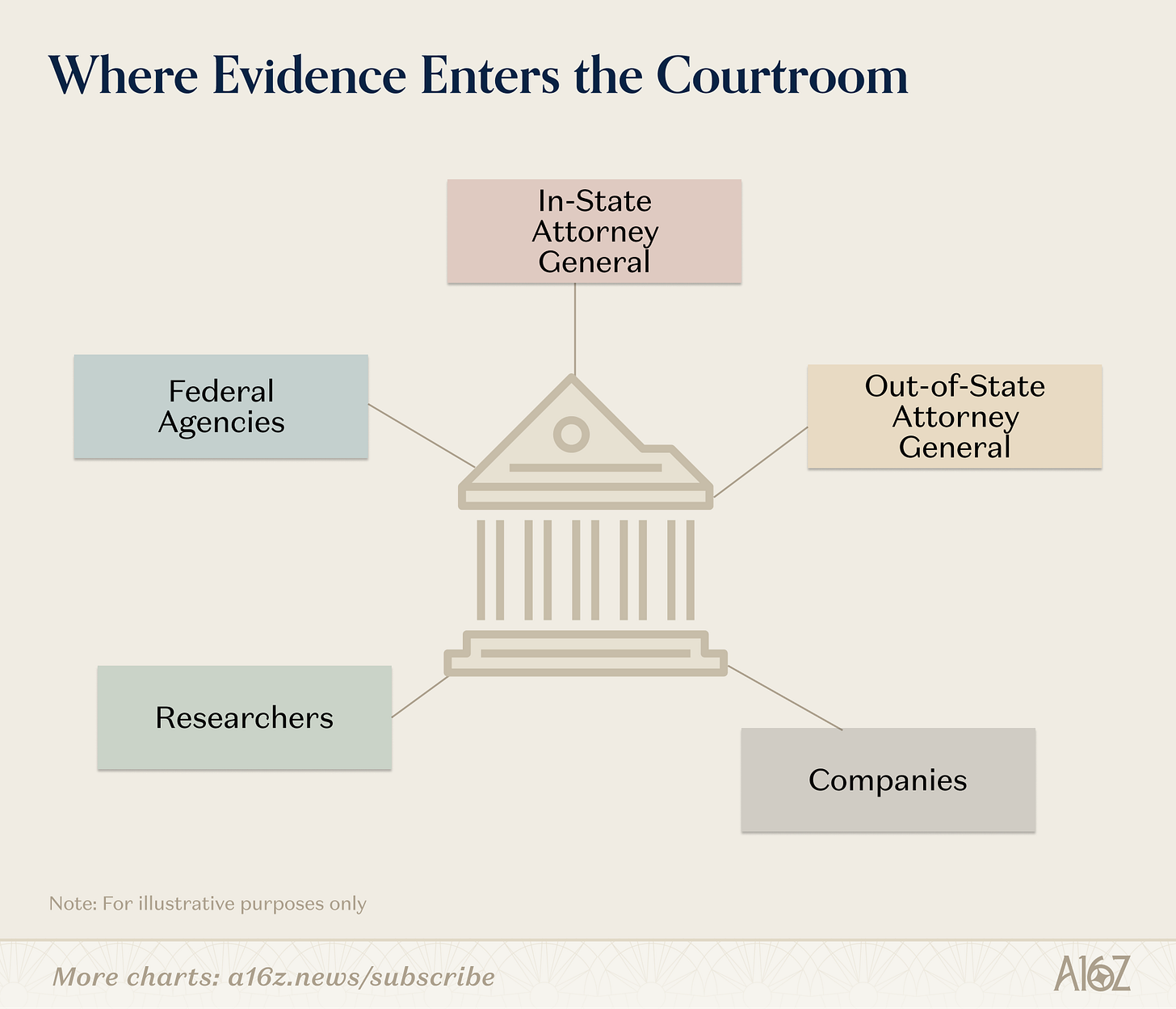

Even robust evidence is useless if it never enters the judicial record. Federal agencies and state attorneys general should make systematic use of statements of interest to put evidence before courts in dormant Commerce Clause cases.

Federal statements of interest

The federal government should file statements of interest that synthesize evidence from the mechanisms described above: OIRA cost-benefit analyses of the specific law at issue, NTIA/NIST assessments of aggregate state AI law effects, and summaries of company-reported compliance data from the Commerce Department. These filings would give courts a consolidated, expert-informed evidentiary package that no single litigant could assemble.

In-state attorney general statements

The attorney general of the state that enacted the challenged law should file statements of interest documenting the law’s local benefits, drawing on the biannual audits described above. This ensures the court has a full account of the regulatory interests the law serves.

Out-of-state attorney general statements

Attorneys general from other states should file statements of interest on spillover costs—the challenged law’s effects on their consumers, economies, and law enforcement capabilities. These filings would give courts a direct account of the interstate burdens that Pike balancing is designed to measure, which might otherwise go unrepresented.

B. Build judicial capacity for cost-benefit analysis

Pike balancing is an analytical exercise, but most judges have limited training in economics or quantitative methods, and the doctrine itself provides almost no methodological guidance. Policymakers and legal institutions should invest in changing that.

Judicial education

Judicial conferences and judicial training programs should include credible, balanced, and institutionally grounded sessions led by economists, social scientists, and practitioners. These sessions should cover methodologies for judicial cost-benefit analysis; techniques for incorporating qualitative factors (such as product quality, user welfare, and social welfare) into a cost-benefit framework; and the specific economics of AI regulation. They should also give judges tools for weighing costs and benefits: what types and levels of costs might be “clearly excessive” in relation to local benefits? The goal is to give judges a practical toolkit, not to turn them into economists.

Technical guidance

The American Bar Association, Federal Judicial Center, and similar organizations should publish guidance for judges on evaluating the costs and benefits of AI laws, addressing methodological questions like how to handle uncertainty, discount future effects, and compare qualitative and quantitative factors, as well as substantive questions specific to AI.

Additionally, courts could consider instituting a fellowship program to embed this expertise in the judiciary. TechCongress is one model for this type of program in the legislative branch, placing technologists in congressional offices to build institutional capacity on technical issues. A similar model could bring cost-benefit expertise directly into judges’ chambers, or provide it as a pooled, courtwide resource that judges could draw from in individual cases.

Congressional research

The Congressional Research Service should publish a report on judicial cost-benefit analysis in the context of state AI laws, covering methods, best practices, and approaches for integrating qualitative factors.

Research funding

The government should fund research on judicial cost-benefit methodology, including work that develops and tests frameworks judges could apply in dormant Commerce Clause cases. Funding could come through NSF grants for interdisciplinary research at the intersection of law and economics, NIST-sponsored applied research programs, or judicial branch initiatives such as those administered by the Federal Judicial Center.

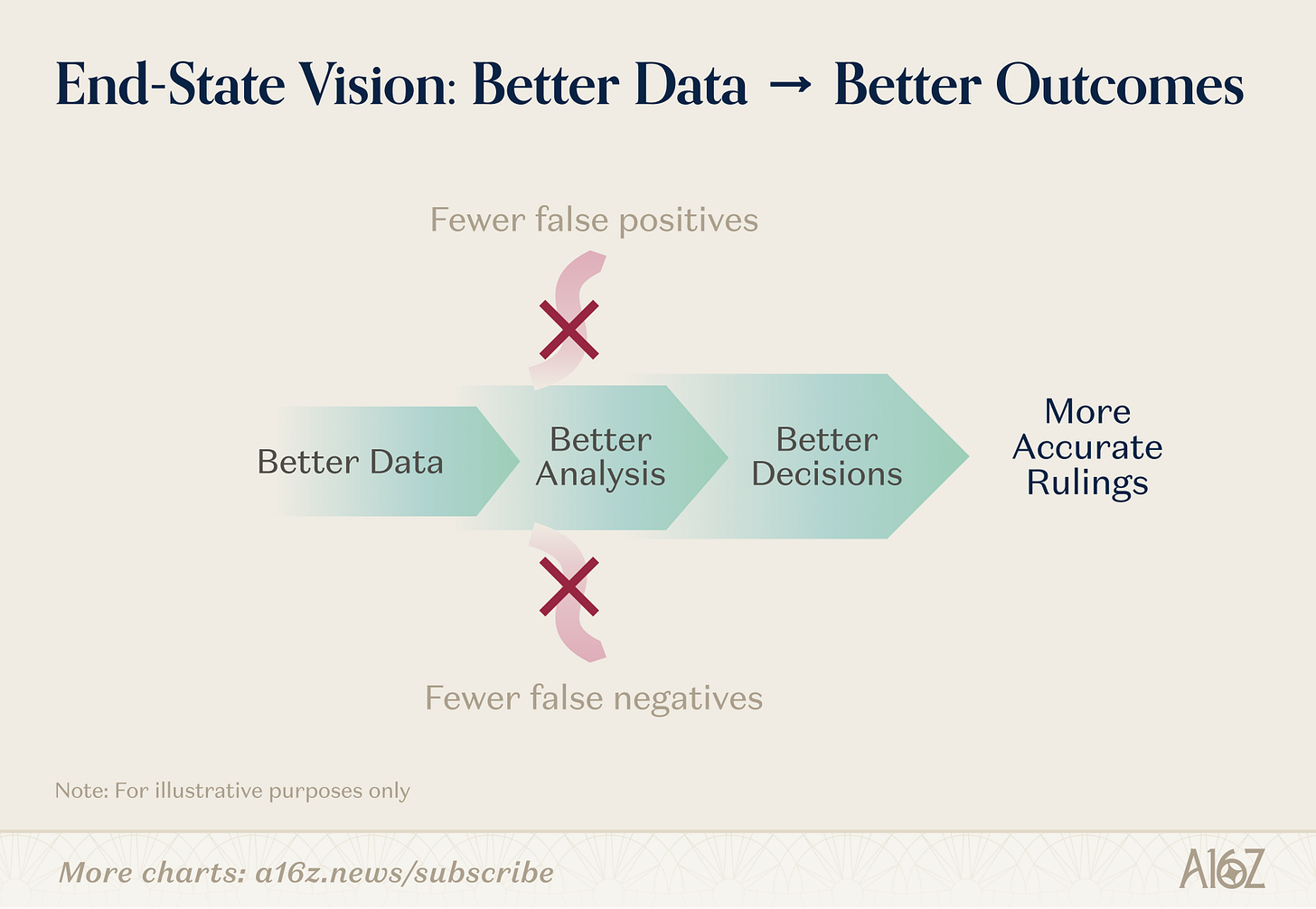

Better data, better decisions

Pike balancing asks courts to answer an empirical question—whether a state law’s burdens on interstate commerce are clearly excessive relative to its local benefits—but gives them almost none of the empirical tools the question demands.

As states accelerate their efforts to regulate AI and the federal government moves to challenge laws it views as unconstitutional, this gap is no longer academic. Courts will be asked to make these determinations, and they will make better ones with better evidence and better analytical frameworks.

The reforms proposed here address both sides of that problem.

Standardized evidentiary statements, ongoing data collection, corporate reporting mechanisms, and experimental programs would build a richer factual record than courts have ever had in dormant Commerce Clause cases.

Statements of interest, judicial education, and technical guidance would help judges make sense of that record.

Together, these reforms would improve not only Pike balancing but also the quality of state AI regulation itself, because a legislative process that is forced to articulate and defend the costs and benefits of its choices is more likely to produce laws that are worth defending.
